Scaling a governance model

2025 · Credo AI

The problem

As AI adoption scaled, teams registered the same tools repeatedly under slightly different use cases. Each instance required separate review, ownership, and maintenance—fragmenting context and multiplying governance effort without improving oversight. What looked like growth was actually duplication.

Privacy and Permissions

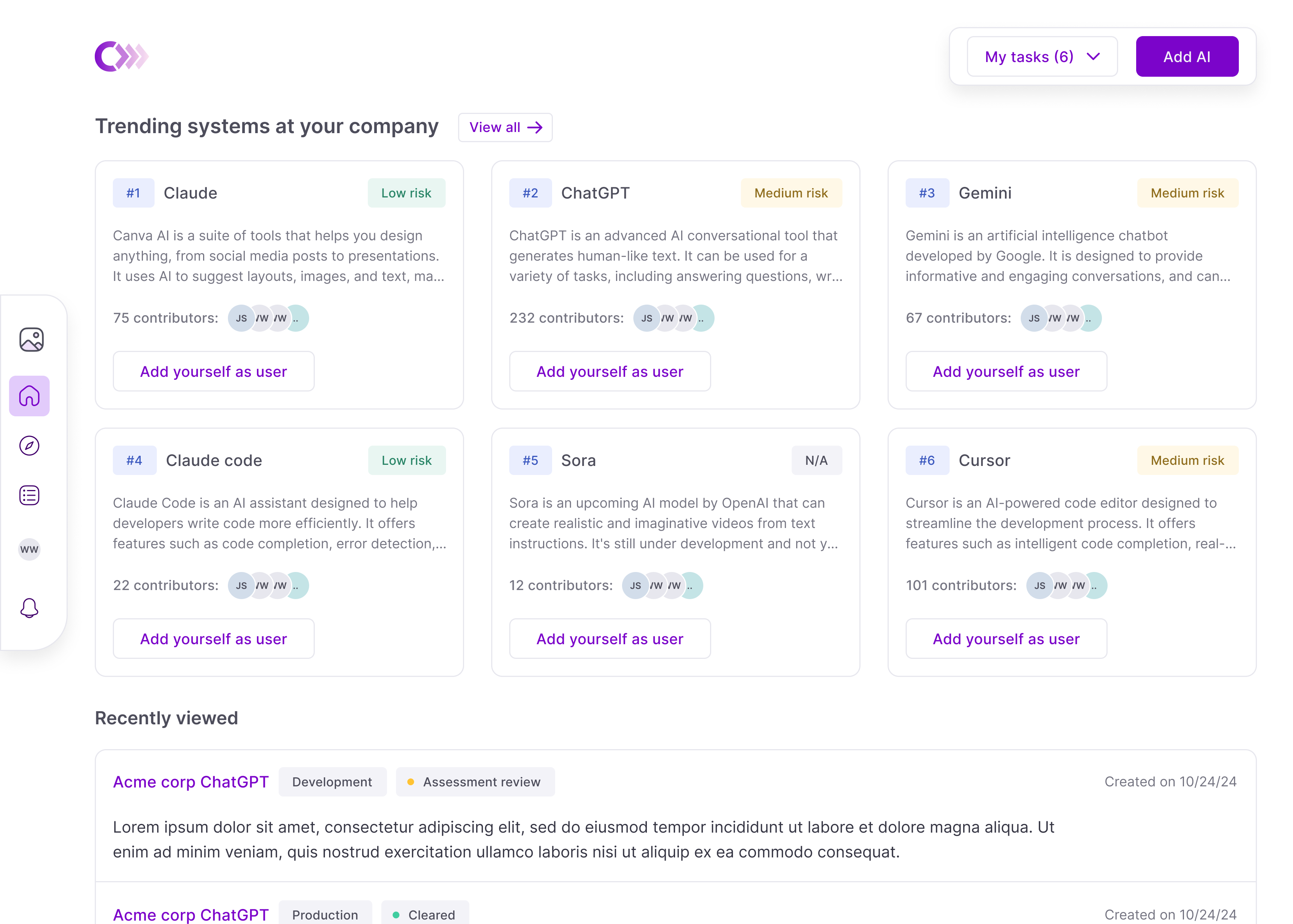

Use cases were originally designed to be visible only to owners, admins, and selected contributors. This strict, one-size-fits-all approach worked in 2023, when enterprises were building their own tools, but as usage shifted to applications and APIs, a public setting was needed to prevent duplication.

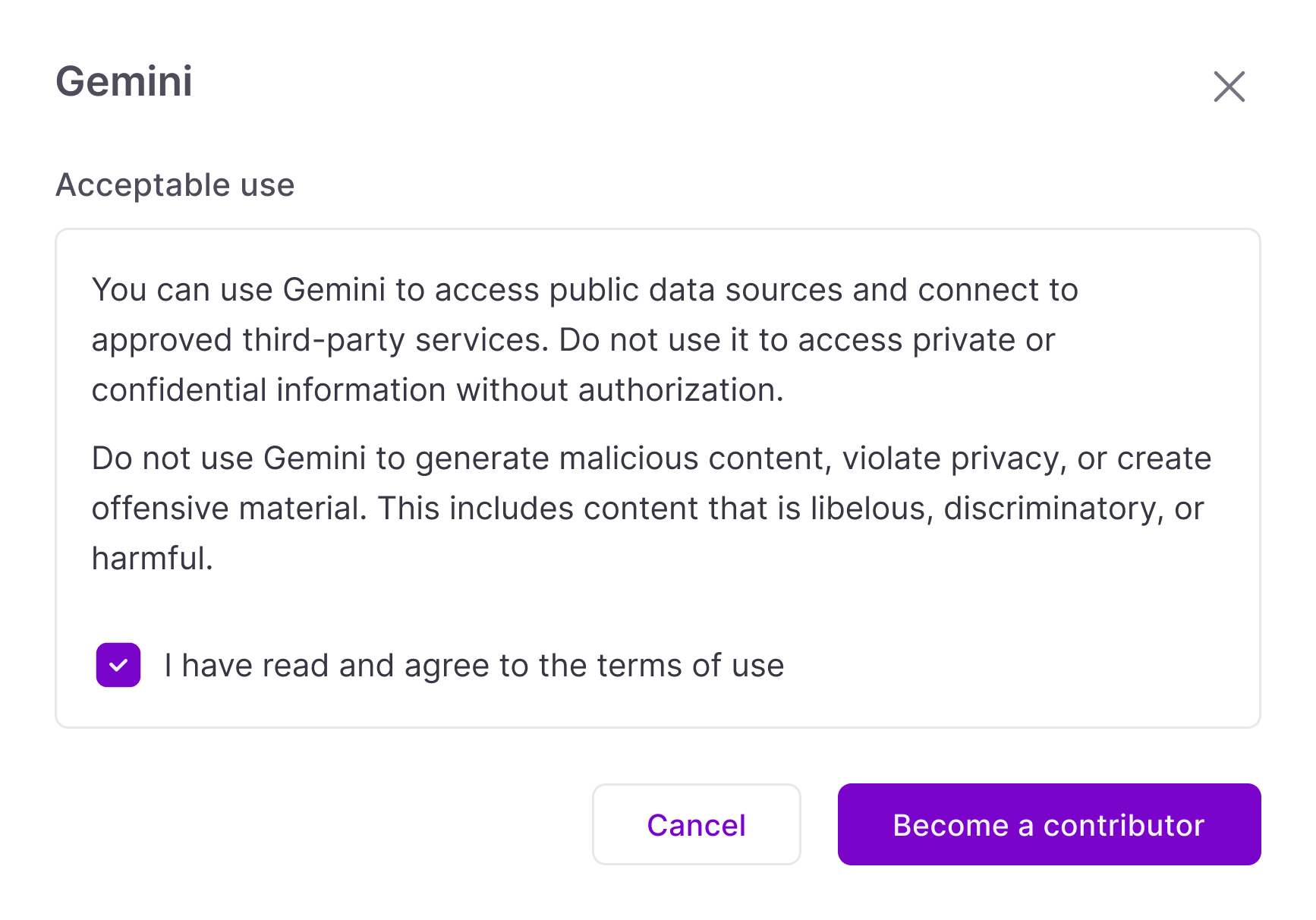

The goal of public AI usage in Credo AI was not only visibility but also participation: users can accept governance terms and add themselves to existing systems.
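The permission rules described above can be sketched as a small data model. This is an illustrative sketch only, not Credo AI's implementation: the names `Visibility`, `UseCase`, `can_view`, and `join` are hypothetical, and the rule that joining requires accepting terms is inferred from the description above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Visibility(Enum):
    PRIVATE = "private"  # visible to owners, admins, and selected contributors
    PUBLIC = "public"    # visible to everyone in the organization


@dataclass
class UseCase:
    # Hypothetical shape; field names are assumptions for illustration.
    name: str
    visibility: Visibility
    owners: set[str] = field(default_factory=set)
    contributors: set[str] = field(default_factory=set)
    members: set[str] = field(default_factory=set)  # users who accepted the terms


def can_view(use_case: UseCase, user: str, is_admin: bool = False) -> bool:
    """Public use cases are visible to all; private ones only to a named few."""
    if use_case.visibility is Visibility.PUBLIC:
        return True
    return is_admin or user in use_case.owners or user in use_case.contributors


def join(use_case: UseCase, user: str, accepted_terms: bool) -> bool:
    """Add a user to a public use case once they accept its governance terms."""
    if use_case.visibility is not Visibility.PUBLIC or not accepted_terms:
        return False
    use_case.members.add(user)
    return True
```

In this sketch, self-service joining is only possible on public use cases, so private use cases keep their original, invitation-only behavior.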

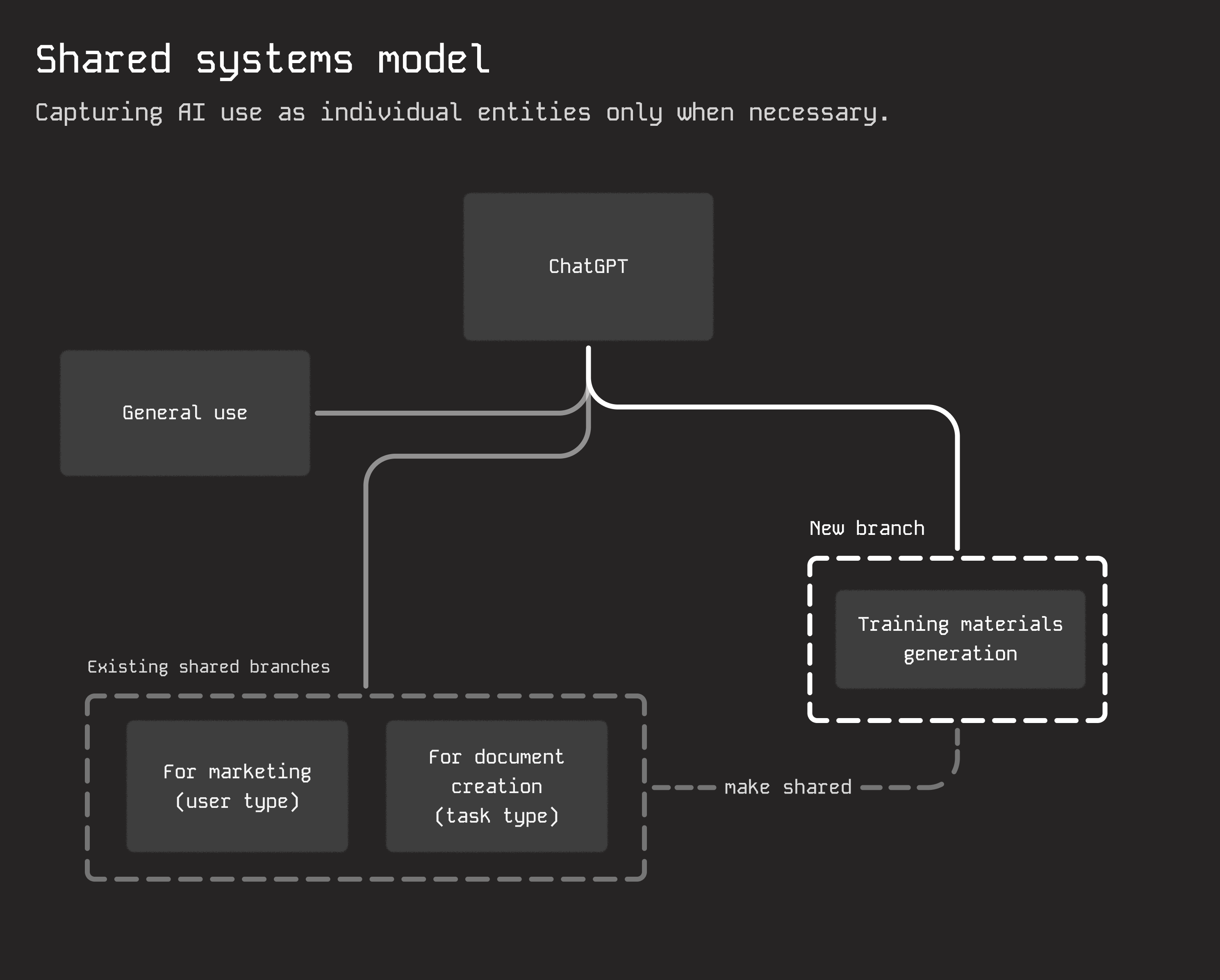

Scaling the model

Acceptable use consolidated broad, low-risk AI usage into a single governed system, collapsing duplication and establishing a baseline for oversight. The system can branch: shared systems extend into usage-specific sub-entities with their own policies and constraints. This preserves centralized governance for common patterns while allowing specialized usage to evolve independently, with private use cases introduced only when necessary.
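One way to picture the branching structure is as a tree in which each sub-entity carries its own policies on top of the shared system's. The sketch below is an assumption-laden illustration, not the shipped design: `GovernedSystem`, `branch`, and `effective_policies` are hypothetical names, and the idea that a branch inherits its parent's policies is my reading of "extend", not something the product confirms.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GovernedSystem:
    # Hypothetical model of a shared system and its usage-specific branches.
    name: str
    policies: list[str] = field(default_factory=list)
    parent: Optional["GovernedSystem"] = field(default=None, repr=False, compare=False)
    children: list["GovernedSystem"] = field(default_factory=list)

    def branch(self, name: str, extra_policies: list[str]) -> "GovernedSystem":
        """Create a usage-specific sub-entity with its own additional policies."""
        child = GovernedSystem(name, list(extra_policies), parent=self)
        self.children.append(child)
        return child

    def effective_policies(self) -> list[str]:
        """Assumed rule: inherited policies first, then the branch's own."""
        inherited = self.parent.effective_policies() if self.parent else []
        return inherited + [p for p in self.policies if p not in inherited]


# Example policy names are placeholders, not real Credo AI policies.
base = GovernedSystem("Acceptable use", ["no-sensitive-data"])
support = base.branch("Customer support drafting", ["human-review"])
print(support.effective_policies())
```

Under these assumptions the shared baseline stays in one place, and a branch only records what differs from it.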

Duplication Prevention

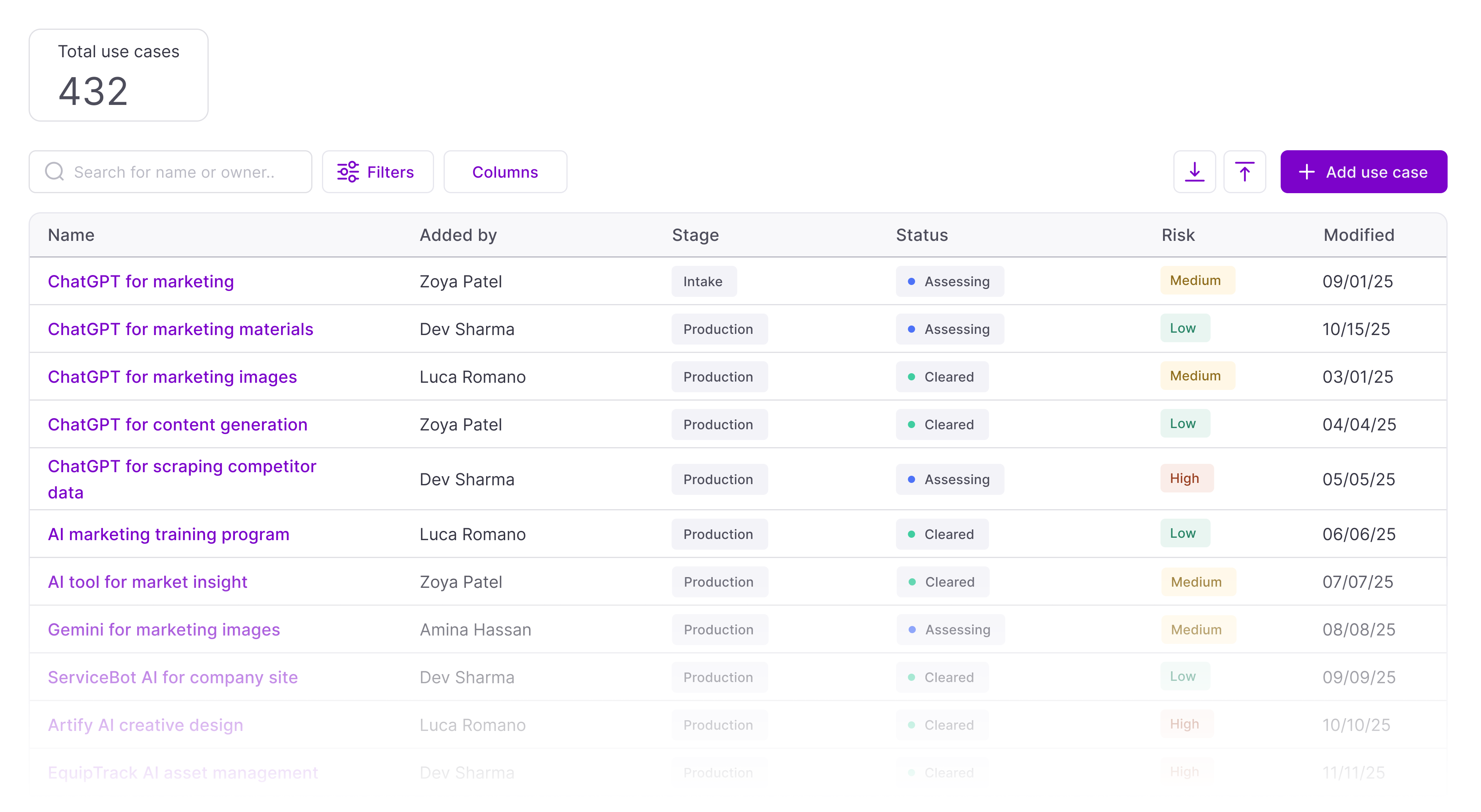

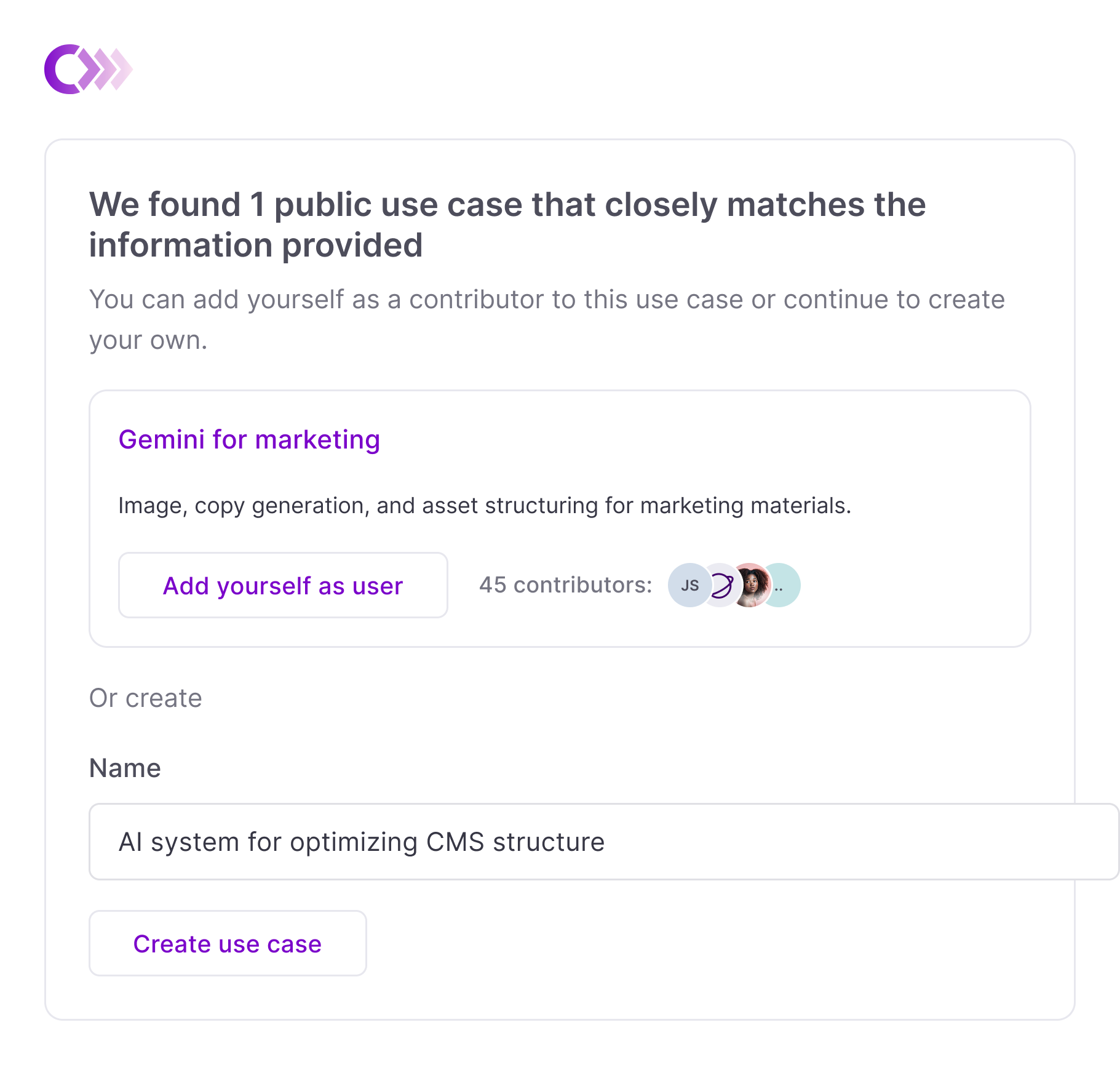

Discovery builds awareness, but prevention happens at registration. The AI-assisted flow evaluates user intent and surfaces existing systems with overlapping purpose, prompting an explicit choice: join an existing system or explain why a new use case is needed. This turns duplication from an accidental outcome into a deliberate decision, preventing fragmentation while still allowing warranted exceptions.
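The registration check can be sketched as follows. The real flow is AI-assisted; this sketch swaps in a plain word-overlap (Jaccard) score as a stand-in so the logic is easy to follow. All names here (`find_overlaps`, `register`, the `threshold` value, the returned status strings) are hypothetical and chosen for illustration.

```python
import re
from typing import Optional


def tokens(text: str) -> set[str]:
    """Lowercase word set for a free-text purpose description."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def similarity(a: str, b: str) -> float:
    """Jaccard overlap of word sets; a simple stand-in for AI intent matching."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def find_overlaps(intent: str, existing: dict[str, str], threshold: float = 0.3) -> list[str]:
    """Return names of existing systems whose purpose overlaps the stated intent."""
    scored = [(similarity(intent, purpose), name) for name, purpose in existing.items()]
    return [name for score, name in sorted(scored, reverse=True) if score >= threshold]


def register(
    intent: str,
    existing: dict[str, str],
    join: Optional[str] = None,
    justification: Optional[str] = None,
) -> str:
    """Force an explicit choice when overlaps exist: join, or justify a new use case."""
    overlaps = find_overlaps(intent, existing)
    if not overlaps:
        return "created"
    if join in overlaps:
        return f"joined:{join}"
    if justification and justification.strip():
        return "created_with_justification"
    return "choice_required"
```

The point of the sketch is the last function: when an overlap exists, a new use case cannot be created silently, only by joining or by stating a reason.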

Impact

Public use cases and acceptable use have been effective at limiting the creation of duplicate entities. However, the main benefit of the framework is forward-looking.

I partnered with governance leads and our advisory team to design this structure around how governance leads want to work moving forward.

As of writing, we have shipped public use cases, acceptable use, and duplication prevention, but the structure around branching is still in progress.