Translating intent into governance

2025 · Credo AI

Overview

Credo AI helps enterprise teams safely adopt and govern AI at scale. I led the design of AI Assist, our first foray into AI-forward governance workflows.

The project lasted several months and had an immediate impact: registration processes that previously took weeks of coordination began to be completed in a single session.

The Problem

As AI usage scales, manual registration forms create bottlenecks: users struggle with terminology, submissions lack consistency, and governance teams spend time interpreting submissions rather than evaluating risk.

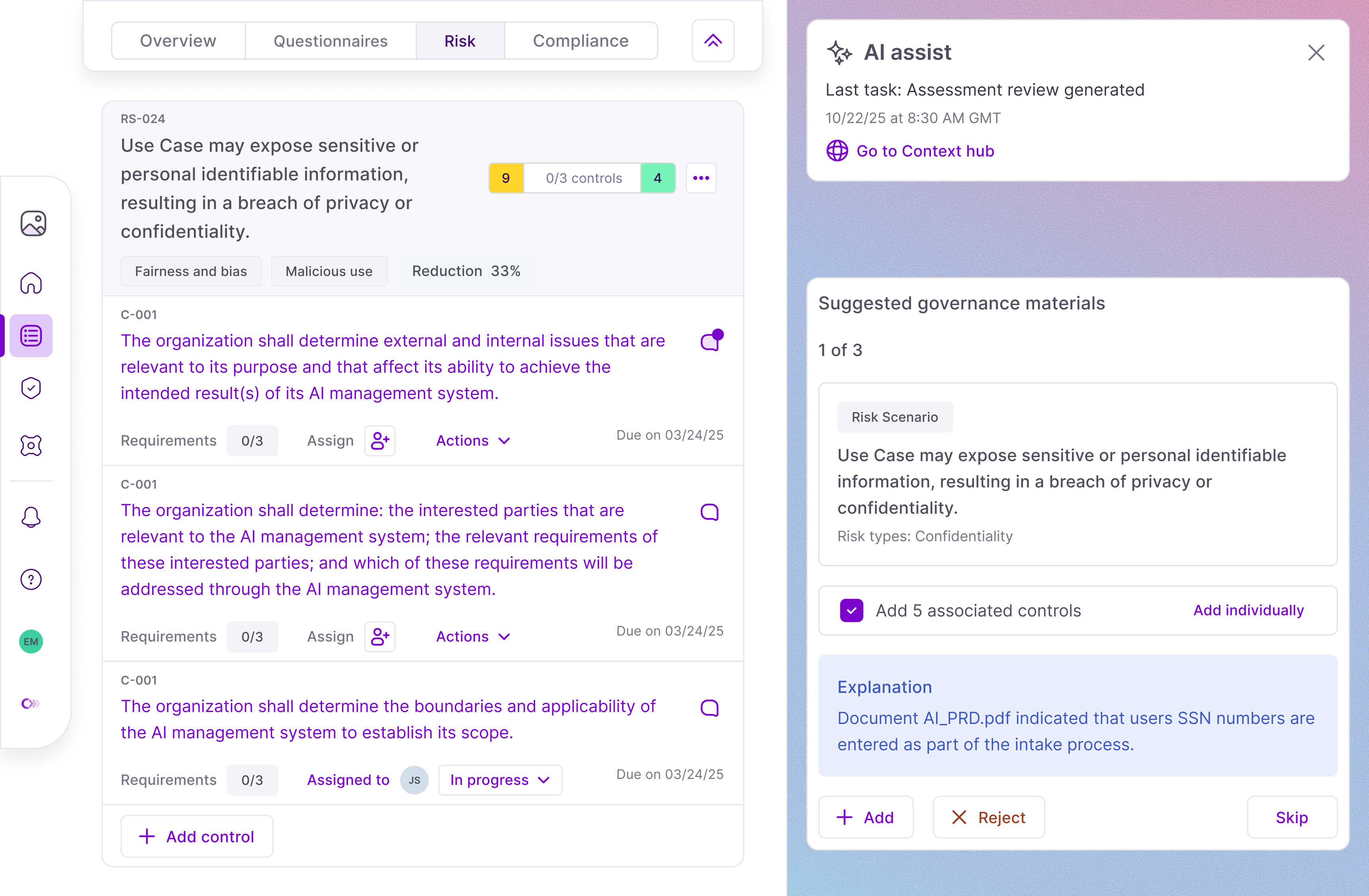

I designed AI Assist to bridge this gap by structuring intent—translating natural language into explicit signals, surfacing assumptions, and highlighting uncertainty—without automating approval decisions.

Earning Trust

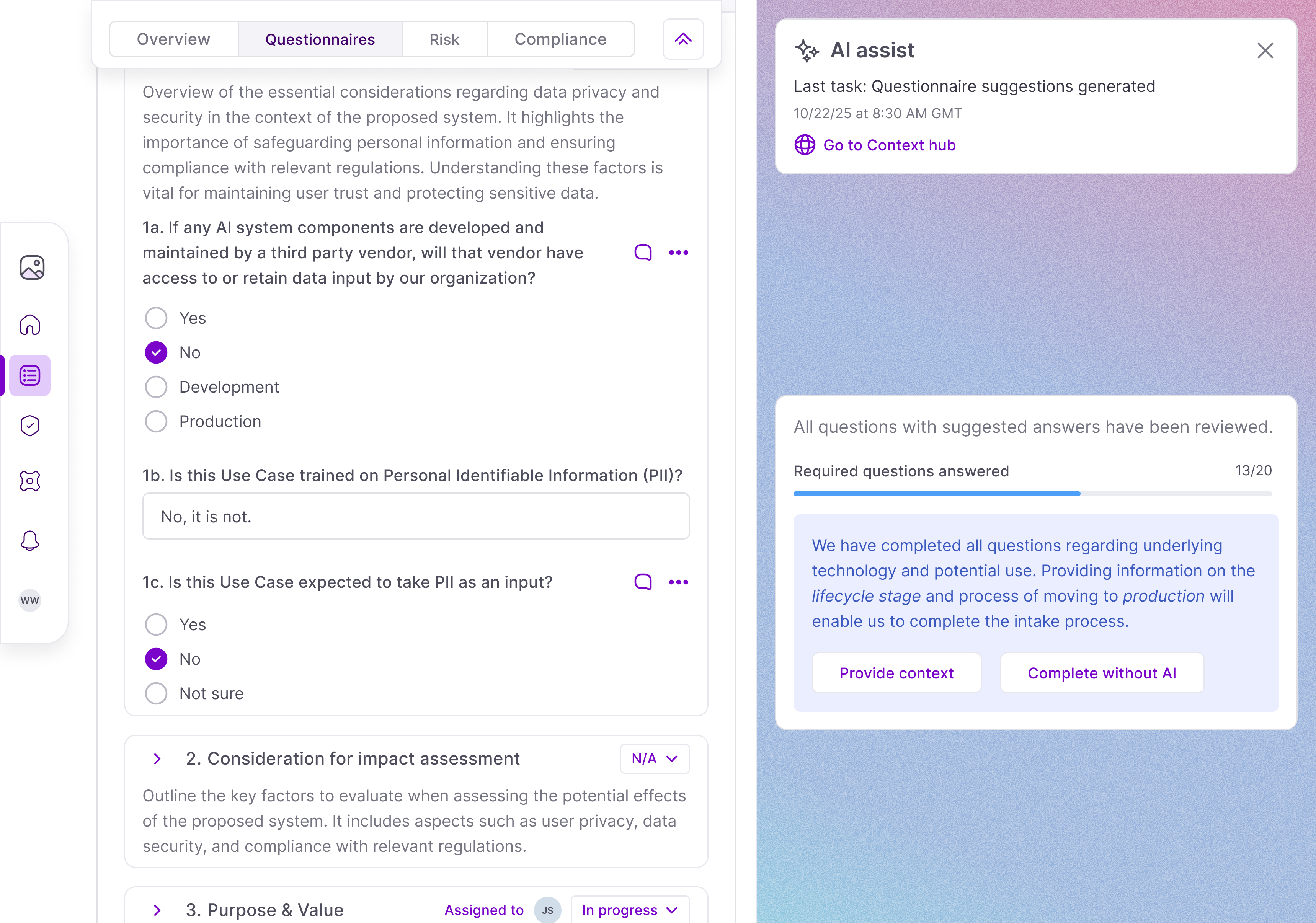

The core value of AI Assist is its ability to take limited information and fill out lengthy compliance forms. Before setting out on that longer-running task, we added a step that verifies the initial prompt and asks up to two follow-up questions.

This not only helped ensure that non-technical prompts had sufficient context, but was also the first step in gaining user trust: it showed that the AI was listening to the inputs and not acting without proper guidance.
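To make the verification step concrete, here is a minimal sketch of how a cap of two follow-up questions could work. It is an illustration, not the production logic: the context slots, the question wording, and the `follow_up_questions` function are all hypothetical, and in practice a model rather than a lookup table would judge what is missing.

```python
from typing import Optional

MAX_FOLLOW_UPS = 2  # the cap described above

# Hypothetical context slots, each paired with the question asked when it is missing.
QUESTIONS = {
    "purpose": "What is the AI system meant to do?",
    "data": "What kind of data will it use?",
    "users": "Who will use or be affected by it?",
}


def follow_up_questions(context: dict[str, Optional[str]]) -> list[str]:
    """Return questions for missing slots, never more than MAX_FOLLOW_UPS."""
    missing = [slot for slot in QUESTIONS if not context.get(slot)]
    return [QUESTIONS[slot] for slot in missing[:MAX_FOLLOW_UPS]]


# A prompt that only states a purpose triggers the two remaining questions.
questions = follow_up_questions({"purpose": "Summarize support tickets"})
```

Capping the list keeps the check lightweight: the user sees that the prompt was read, without being pulled back into a form-like interrogation.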

Tuning Performance

Earning trust is not only about UI patterns, but also about the behavior underneath. I prototyped claim separation, confidence gating, and two-call verification; however, for answering long forms, none of these methods proved effective.

As a result, AI Assist is guided purely by a series of prompts and contracts, ahead of the higher-stakes tasks of risk evaluation (later in the governance lifecycle).

This carried real risk, and it shaped how we controlled the AI's behavior: for fields that have not been thoroughly evaluated, AI Assist defaults to suggesting values rather than applying them.
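The suggest-by-default behavior can be sketched in a few lines. This is a simplified illustration under stated assumptions: `FieldDraft`, its `evaluated` flag, and `write_mode` are hypothetical names, and the real criteria for "thoroughly evaluated" are not captured here.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    SUGGEST = "suggest"  # shown to the user for review
    APPLY = "apply"      # written directly into the form


@dataclass
class FieldDraft:
    name: str
    value: str
    evaluated: bool = False  # hypothetical flag: has this field been thoroughly evaluated?


def write_mode(draft: FieldDraft) -> Mode:
    """Default to SUGGEST; only evaluated fields may be applied directly."""
    return Mode.APPLY if draft.evaluated else Mode.SUGGEST


# An unevaluated field is only ever suggested.
mode = write_mode(FieldDraft(name="intended_use", value="Ticket triage"))
```

Making `evaluated` default to `False` means any new field type starts in suggest mode unless someone deliberately opts it in.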

Successes, Failures, and Learnings

The model performed much better than expected. Even with limited context, we immediately saw use cases being filled out faster and in greater detail. This feature was also a key differentiator when sharing our vision with potential partners, enterprises, and funders.

But there were plenty of bumps along the way. Isolating parts of the flow allowed us to test and tweak; however, it masked some of the speed issues we were having, so some UI details could not be ironed out until close to release.

Reflection

This project was straightforward in that users were already clamoring for this type of feature: business users do not want to spend time on AI governance, and governance leads do not want bottlenecks in their processes.

But it will not be long until a fully agentic workflow is realized, with governance leads only using Credo AI for design and monitoring of processes and agents.

In my mind, it will take a lot of layers of business logic and design to get there. But as I learned here, the models might be further ahead than I thought.